From Throughput to Truth: What Kafka Taught Me About System Health

Scaling exposed something deeper than bugs—it exposed misunderstanding. From resource requests to Kafka lag, the real challenge wasn’t fixing the system, it was learning how to read it.

The problems weren’t bugs; they were misunderstandings of the platform.

There’s a point in building a distributed system where everything seems like it’s working.

Messages are flowing. Services are processing. Data is being produced.

And then you scale it.

That’s when things start to feel… unpredictable.

Not because the system is broken, but because your understanding of it is incomplete.

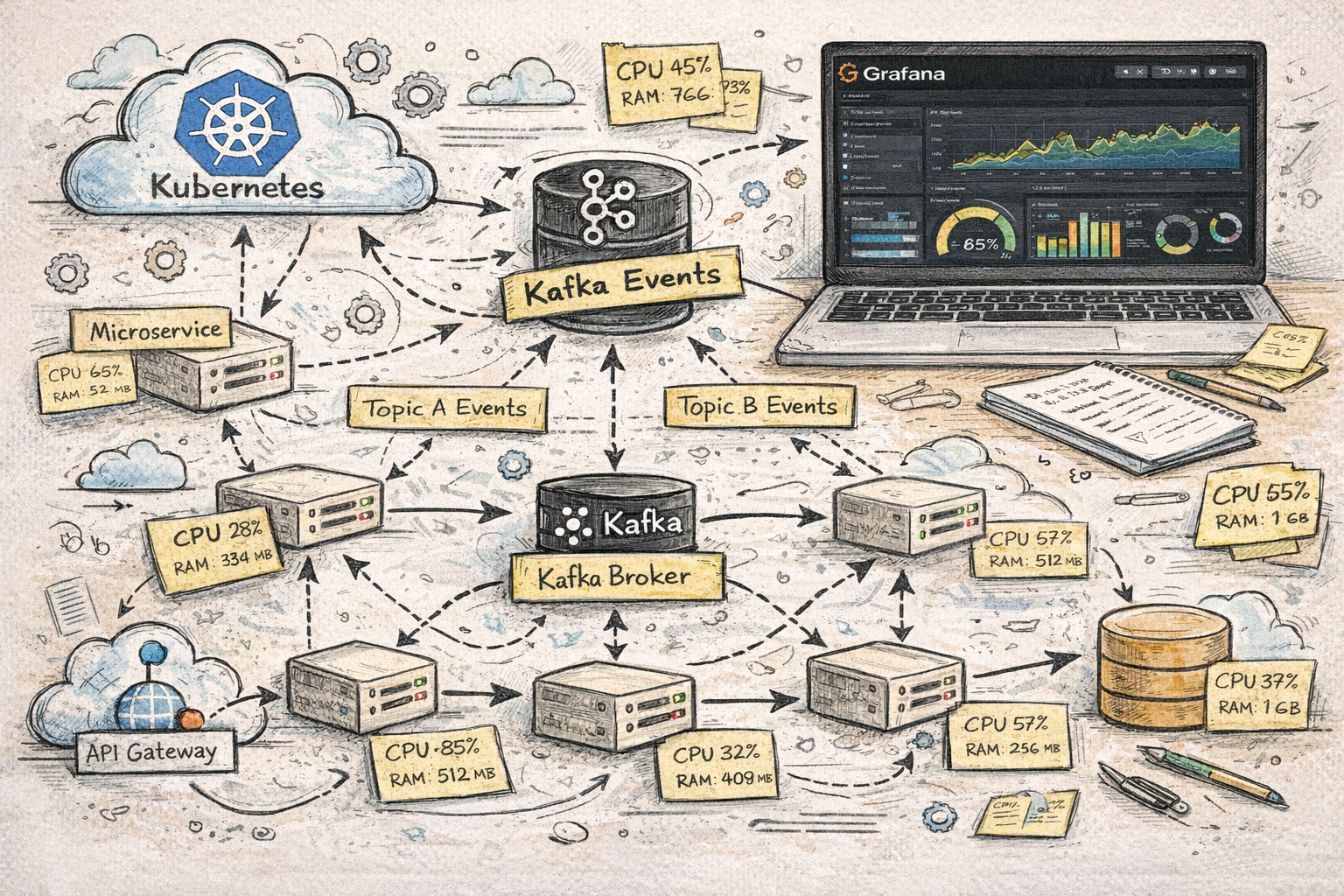

What I’ve been learning recently while working on a Kafka-based, microservices-driven platform in Kubernetes is this:

The system wasn’t lying to me.

I just didn’t yet understand what it was saying.

Requests Aren’t Minimums, They’re Promises

One of the more subtle (and painful) lessons came from how we were configuring CPU and memory in Kubernetes.

At first, it felt intuitive to treat resource requests as the minimum needed to get a service running:

“If it can start with this, we’re good.”

That assumption turned out to be wrong.

We had situations where replicas were scheduled onto nodes that technically had enough available memory based on those low requests. Everything looked fine at startup. But once the service began doing real work, memory usage increased (because of course it did) and suddenly there wasn’t enough available capacity on the node.

The result: crashes. Instability. Confusion.

Kubernetes didn’t fail us, we gave it bad information.

Requests aren’t about the minimum required to start. They are signals to the scheduler about what a service actually needs to run normally. If that signal is wrong, the scheduler makes perfectly logical decisions based on bad assumptions.

The real shift was this:

Requests should reflect expected steady-state usage, not minimum viability.

Once that clicked, placement became more predictable, and stability followed.
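To make that failure mode concrete, here's a minimal sketch of the arithmetic. It is not the real Kubernetes scheduler; it only assumes that placement is decided on requested memory rather than actual usage. The node size, the pod figures, and every name in it are made up for illustration.

```python
from __future__ import annotations
from dataclasses import dataclass

@dataclass
class Pod:
    name: str
    request_mib: int       # what we told the scheduler
    steady_state_mib: int  # what the service really uses under load (hypothetical figure)

def place(node_allocatable_mib: int, pods: list[Pod]) -> list[Pod]:
    """Simplified placement: admit a pod while the SUM OF REQUESTS still fits."""
    placed: list[Pod] = []
    for pod in pods:
        requested = sum(p.request_mib for p in placed) + pod.request_mib
        if requested <= node_allocatable_mib:
            placed.append(pod)
    return placed

def actual_usage(pods: list[Pod]) -> int:
    """Memory the placed pods consume once they are doing real work."""
    return sum(p.steady_state_mib for p in pods)

NODE_MIB = 4096  # hypothetical node

# Same service, two ways of filling in the request.
startup_sized = [Pod(f"svc-{i}", request_mib=256, steady_state_mib=1024) for i in range(8)]
steady_sized = [Pod(f"svc-{i}", request_mib=1024, steady_state_mib=1024) for i in range(8)]

low_placed = place(NODE_MIB, startup_sized)
honest_placed = place(NODE_MIB, steady_sized)

print(len(low_placed), actual_usage(low_placed))        # 8 pods, 8192 MiB on a 4096 MiB node
print(len(honest_placed), actual_usage(honest_placed))  # 4 pods, 4096 MiB
```

With startup-sized requests, all eight replicas "fit" and the node ends up needing twice the memory it has. With steady-state requests, only four are admitted and the rest would have to land somewhere else, which is exactly the decision we wanted made up front.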

Observability Turns Chaos into Causality

Before proper monitoring is in place, distributed systems feel chaotic.

You see CPU spikes. Memory usage fluctuates. Errors appear in logs. But everything feels disconnected.

Each issue looks like its own isolated problem.

Once you introduce proper tracing and aggregated logging, that changes completely.

You start to see:

- how a single action moves across Kafka topics via events

- how that event is picked up and processed by different services

- how work is split across multiple replicas of the same service

- how one failure propagates through the system

In a Kafka-based system, a single “action” isn’t contained in one place. It might start as a message in one topic, get transformed and published to another, and be processed by multiple services, each potentially running several replicas.

Without the ability to trace that flow end-to-end, you’re left guessing.

Aggregated logging becomes essential here. When you have multiple replicas of the same service, logs are scattered across pods. A single action might touch several of them, and without a centralized way to search across all replicas, you can’t reconstruct what actually happened.

You don’t just need logs, you need to be able to correlate them.

Without that, every failure looks isolated.

With it, you can follow a single action across topics, services, and replicas, and finally see cause and effect.
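Here's a rough sketch of what that correlation looks like once logs are aggregated. It assumes every service copies a correlation ID onto each log line and onto each event it publishes (for example in a message header). The field names, pod names, and log messages are hypothetical, not the output of any particular logging stack.

```python
from collections import defaultdict

# Hypothetical log records, already pulled from every replica into one place.
LOGS = [
    {"ts": 3, "pod": "enricher-1", "correlation_id": "a1", "msg": "published to topic enriched"},
    {"ts": 1, "pod": "ingest-0", "correlation_id": "a1", "msg": "consumed from topic raw"},
    {"ts": 2, "pod": "ingest-2", "correlation_id": "b7", "msg": "consumed from topic raw"},
    {"ts": 4, "pod": "writer-0", "correlation_id": "a1", "msg": "ERROR: write failed"},
]

def timelines_by_correlation_id(records):
    """Group records by correlation ID and order each group by timestamp."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record["correlation_id"]].append(record)
    return {cid: sorted(rs, key=lambda r: r["ts"]) for cid, rs in grouped.items()}

timelines = timelines_by_correlation_id(LOGS)

# Follow one action across services and replicas.
for record in timelines["a1"]:
    print(record["ts"], record["pod"], record["msg"])
```

Read in isolation, the error on `writer-0` looks like its own problem. Read as the last step of the `a1` timeline, it has a history: which replica consumed the original message, which one transformed it, and where it finally failed.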

In a system built around Kafka and microservices, this isn’t optional, it’s foundational.

A distributed system without traceability is indistinguishable from randomness.

Lag Is a Signal, Not a Symptom

For me, Kafka consumer lag is one of the most misunderstood metrics in a system like this.

The instinct is to treat lag as a failure:

“Why are we behind?”

But lag isn’t inherently bad. Lag is information.

It tells you:

- how far behind you are relative to incoming data

- whether your system is keeping up

- how much headroom you have

Lag wasn’t telling us something was broken, it was telling us how far behind reality we were.
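The arithmetic behind those three questions is small enough to sketch. This assumes you already have, per partition, the latest offset in the log and the consumer group's committed offset, plus rough produce and consume rates. The function names and numbers are illustrative and not part of any Kafka client.

```python
def partition_lag(end_offsets, committed_offsets):
    """Lag per partition: latest offset in the log minus the group's committed offset."""
    return {p: max(end_offsets[p] - committed_offsets[p], 0) for p in end_offsets}

def seconds_to_catch_up(total_lag, consume_rate_per_s, produce_rate_per_s):
    """Time to drain the backlog at current rates, or None if we are not gaining ground."""
    if total_lag == 0:
        return 0.0
    net_rate = consume_rate_per_s - produce_rate_per_s
    if net_rate <= 0:
        return None  # consuming no faster than producing: the backlog never shrinks
    return total_lag / net_rate

# Hypothetical snapshot of a three-partition topic.
end = {0: 1500, 1: 1200, 2: 900}
committed = {0: 1400, 1: 1200, 2: 600}

lag = partition_lag(end, committed)
total = sum(lag.values())

print(lag)                                  # {0: 100, 1: 0, 2: 300}
print(seconds_to_catch_up(total, 250, 200)) # 8.0
print(seconds_to_catch_up(total, 200, 200)) # None
```

The same backlog of 400 messages reads very differently in the last two lines: with headroom it is an eight-second delay, and without headroom it is a backlog that will never clear on its own.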

Sometimes lag is expected. Sometimes it’s acceptable. In some cases, it’s even useful as a buffer against spikes.

The key is understanding what normal looks like.

Once you do, lag becomes one of the most powerful tools for reasoning about system performance and scaling decisions.

It’s not just a metric, it’s a signal.

Scaling Is a Result, Not a Lever

Early on, scaling feels simple:

“We need more replicas.”

But that’s not really a solution, it’s a reaction, and one I have to fight hard to resist.

As I spent more time understanding how the system behaved, that thinking evolved into something more precise:

“What signals should actually drive scaling?”

Scaling decisions should come from:

- consumer lag trends

- throughput versus ingestion rate

- processing time per message

Not just:

- CPU spikes

- memory pressure

- a general sense that “things look busy”

Auto-scaling is only safe when you understand what you’re scaling for.

Auto-scaling a system you don’t understand is just a faster way to make bigger mistakes.

If you don’t understand lag, you might scale unnecessarily.

If you don’t understand processing characteristics, you might over-scale or thrash.

If your bottleneck isn’t the consumer, scaling won’t help at all.
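As a sketch of what signal-driven scaling could look like, the snippet below sizes a consumer from ingestion rate and per-message processing time, and checks the lag trend before acting. It rests on several assumptions of mine: each replica processes one message at a time, all replicas share one consumer group (so replicas beyond the partition count would sit idle), and the 70% utilization target is an arbitrary placeholder. None of the names come from Kubernetes or Kafka.

```python
import math

def replicas_needed(ingest_rate_per_s, seconds_per_message, partitions, target_utilization=0.7):
    """Replicas required to keep up with ingestion, capped at the partition count."""
    per_replica_rate = 1.0 / seconds_per_message  # messages/s one replica can process
    raw = ingest_rate_per_s / (per_replica_rate * target_utilization)
    return min(max(math.ceil(raw), 1), partitions)

def lag_is_growing(lag_samples, min_growth=0):
    """Crude trend check: has lag risen from the first sample to the last?"""
    return lag_samples[-1] - lag_samples[0] > min_growth

# Hypothetical workload: 200 msg/s arriving, 20 ms of processing per message.
print(replicas_needed(200, 0.02, partitions=12))  # 6
print(replicas_needed(200, 0.02, partitions=4))   # 4: capped, more replicas would idle

print(lag_is_growing([100, 400, 900]))  # True: falling behind, scaling is worth considering
print(lag_is_growing([900, 400, 100]))  # False: already catching up, leave it alone
```

The capped case is the interesting one. If the answer is limited by partitions rather than replicas, adding pods changes nothing, and the real decision is about the topic or about per-message processing time.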

At one point, it was tempting to jump straight to auto-scaling: let Kubernetes and Kafka sort it out. But without a clear understanding of what “healthy” looked like, that would have just turned guesswork into automation.

Now that I’m starting to understand what these signals actually mean, the next step becomes much more interesting: not just scaling manually, but allowing the system to scale itself based on those signals.

Scaling replicas. Possibly even scaling nodes. But only after understanding.

Auto-scaling isn’t where you start, it’s where you arrive.

Performance Is About Feedback Loops

One of the most surprising challenges wasn’t tuning the system, it was defining what “good performance” even meant.

Most conversations start with:

- throughput

- latency

- scaling

But in a big data system, those are derived concerns.

For me, one of the most important questions became:

Is this fast enough for me to actually work with it?

If a workflow takes too long:

- I can’t iterate quickly

- I can’t debug effectively

- I lose context between runs

If I have to wait too long to see results, I’m no longer debugging, I’m guessing.

And this doesn’t just impact development, it impacts QA.

When performance lags:

- QA waits longer for results

- feedback cycles stretch

- validation windows shrink

When performance lags, QA doesn’t just slow down; it compresses. People end up verifying less, not because they want to, but because they run out of time.

That’s where risk enters the system.

Functionality gets pushed forward partially verified. Not because of negligence, but because the system didn’t allow enough time to build confidence.

A system that’s technically correct but too slow to validate is functionally broken.

This changes how you think about performance entirely.

You don’t start with Kafka. You start with people.

- How fast do results need to be available to debug effectively?

- How many iterations are required to gain confidence?

- What’s an acceptable feedback loop for developers and QA?

From there, you derive:

- acceptable processing times

- acceptable lag

- required throughput

- required scaling
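That derivation can be written down as plain arithmetic. The sketch below starts from a human feedback budget and works backward to throughput, lag, and replica count. Every input is a made-up example, and it assumes each replica processes one message at a time and that the work spreads evenly across replicas.

```python
import math

def derive_targets(feedback_budget_s, messages_per_run, seconds_per_message, freshness_budget_s):
    """Work backward from how long a person can wait to what the system must sustain."""
    # Throughput needed to finish a whole run inside the feedback budget.
    required_throughput = messages_per_run / feedback_budget_s
    # Backlog we can tolerate if results must be no older than the freshness budget.
    max_lag_messages = required_throughput * freshness_budget_s
    # Replicas needed if each one handles a single message at a time.
    min_replicas = math.ceil(required_throughput * seconds_per_message)
    return {
        "required_throughput_per_s": required_throughput,
        "max_lag_messages": max_lag_messages,
        "min_replicas": min_replicas,
    }

# Hypothetical: a QA run of 90,000 messages should finish within 5 minutes,
# each message takes 25 ms to process, and results may be at most 30 s stale.
targets = derive_targets(
    feedback_budget_s=300,
    messages_per_run=90_000,
    seconds_per_message=0.025,
    freshness_budget_s=30,
)
print(targets)
```

The point of writing it this way is the direction of the calculation: the only input that is not a measurement is the feedback budget, and that one comes from people, not from Kafka.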

Performance isn’t just about speed, it’s about feedback loops.

If your system slows down your ability to learn, it slows down your ability to deliver.

From Signals to Understanding

Each of these lessons ties back to the same underlying idea:

- Resource requests define how the system is scheduled

- Observability defines how the system is understood

- Lag defines how the system is performing

- Performance defines what actually matters

- Scaling becomes the result of all of the above

Individually, these are technical concerns.

Together, they form a system you can actually reason about.

Once you understand the signals, the system stops feeling unpredictable and starts feeling controllable.